The Compound Effect of Split Testing: Small Tests, Big Revenue

Numbers are illustrative. Individual results will vary.

Five tests. Ten months. A site that was making $10,000 a month is now making $11,702 — a 17 percent improvement with no increase in traffic, no new ad spend, and no product changes.

And unlike a traffic spike from a campaign, these gains do not fade when the spend stops. Every future visitor benefits from every winning test that ran before them, for as long as the winning variations stay live.
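To make the arithmetic concrete, here is a minimal sketch of how a handful of small lifts multiply together. The five lift values are hypothetical, chosen only so the total lands near the illustrative figure above; they are not results from real tests, and `compound_revenue` is a name made up for this example.

```python
from math import prod


def compound_revenue(baseline: float, lifts: list[float]) -> float:
    """Apply each winning test's relative lift on top of the previous ones."""
    return baseline * prod(1 + lift for lift in lifts)


# Hypothetical lifts from five winning tests (assumed for illustration only).
lifts = [0.05, 0.03, 0.04, 0.02, 0.02]

monthly = compound_revenue(10_000, lifts)
print(f"${monthly:,.0f} per month, {monthly / 10_000 - 1:.0%} total lift")
# prints "$11,702 per month, 17% total lift"
```

Note that the lifts add up to 16 percent but multiply out to a little over 17 percent. Each win applies to a baseline that earlier wins have already raised, and that gap widens with every additional test.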

Why most people never get here

The compounding only works if you keep testing. Most people do not, because the process feels slow, the individual results seem small, and there is always something more urgent to focus on.

The sites that do compound their gains are the ones that make testing a habit rather than a project. That means having a process for finding what to test, a backlog of ideas to work through, and a tool that handles the statistics so you are not babysitting results.

How to make it repeatable

Find problems continuously, not once

Use heatmaps and session replays on an ongoing basis. Visitor behavior changes as your traffic sources and content change. A heatmap from six months ago may not reflect what is happening now.

Keep a backlog of ideas

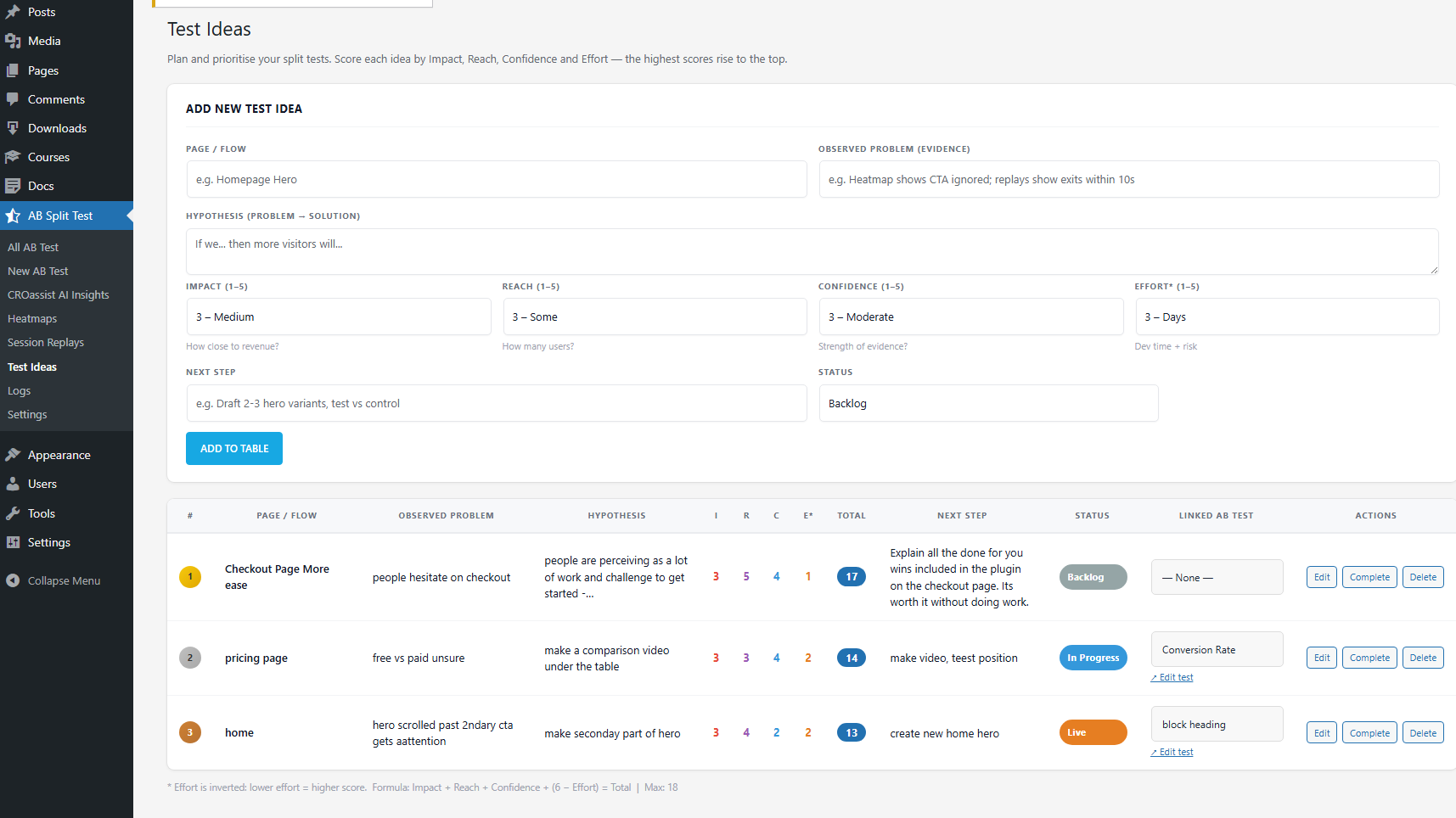

Every time you spot a potential problem in a heatmap, a support conversation, or a customer email, log it. The Test Ideas feature in AB Split Test lets you score each idea by Impact, Reach, Confidence, and Effort so you always know what to run next. You never start from a blank page.
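If you want to see how four scores like these can turn into a ranking, here is a rough sketch. It assumes the commonly used RICE-style formula (reach times impact times confidence, divided by effort). How AB Split Test actually combines the four scores is not stated here, so treat the formula, the scales, and the example ideas as assumptions.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    impact: float      # assumed scale: 1 (minor) to 5 (major)
    reach: float       # assumed: share of visitors affected, 0 to 1
    confidence: float  # assumed: how sure you are, 0 to 1
    effort: float      # assumed: rough days of work, must be > 0

    def score(self) -> float:
        # RICE-style score: bigger payoff and lower effort rank higher.
        return self.impact * self.reach * self.confidence / self.effort


def prioritize(ideas: list[Idea]) -> list[Idea]:
    """Return ideas sorted from highest score to lowest."""
    return sorted(ideas, key=lambda idea: idea.score(), reverse=True)


# Hypothetical backlog entries.
backlog = [
    Idea("Shorter checkout form", impact=4, reach=0.3, confidence=0.7, effort=3),
    Idea("Clearer pricing headline", impact=3, reach=0.8, confidence=0.5, effort=1),
    Idea("Sticky add-to-cart button", impact=2, reach=0.6, confidence=0.8, effort=2),
]

for idea in prioritize(backlog):
    print(f"{idea.score():.2f}  {idea.name}")
```

With these made-up numbers the pricing headline comes out on top: it touches most visitors and takes a day to build, which outweighs the bigger but costlier checkout change.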

Let Autocomplete implement winners immediately

The compounding only works if you actually ship the winners. Autocomplete monitors your test as data comes in and automatically promotes the winning variation to all visitors the moment there is enough statistical evidence. The improvement goes live without you needing to log in and make a manual change.
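For a sense of what "enough statistical evidence" can mean, here is a simplified sketch using a classic two-proportion z-test. This is not a description of how Autocomplete works internally. The function names, the 5 percent threshold, and the minimum sample size are all assumptions made for illustration.

```python
from math import erf, sqrt


def p_value_two_proportions(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation in the data, so no evidence either way
    z = (p_b - p_a) / se
    # Standard normal CDF computed from the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return 2 * (1 - cdf)


def should_promote(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   alpha: float = 0.05, min_visitors: int = 1000) -> bool:
    """Promote variation B only if it is ahead and the gap is unlikely to be noise."""
    if min(n_a, n_b) < min_visitors:
        return False  # assumed guard against deciding on too little data
    b_is_ahead = conv_b / n_b > conv_a / n_a
    return b_is_ahead and p_value_two_proportions(conv_a, n_a, conv_b, n_b) < alpha


# Hypothetical counts: 4.0% vs 5.2% conversion on 5,000 visitors each.
print(should_promote(200, 5000, 260, 5000))  # clear gap
# Hypothetical counts: 4.0% vs 4.2% conversion, too close to call.
print(should_promote(200, 5000, 210, 5000))
```

One caveat: re-running a fixed-threshold test like this every time new data arrives raises the chance of a false winner. A tool that monitors continuously would typically need a sequential or Bayesian method to account for that, which is a good reason to let the tool handle the statistics.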

Use the AI to keep ideas fresh

One reason testing cadences stall is that coming up with good variation ideas gets repetitive. The AI CRO Agent scans your site and generates specific test suggestions based on your actual content and audience — so you are not relying on your own creativity to keep the pipeline moving.

The losing tests do not hurt you

One thing worth saying clearly: not every test will win. Some variations will perform worse than the original. That is not a failure. It is the test working correctly.

When a variation loses, it stops. Visitors go back to the original. The only cost is the time the test ran, and even that gave you useful information about what your visitors do not respond to.

There is real long-term upside from the winners. There is almost no downside from the losers. That asymmetry is what makes consistent testing one of the better uses of time for any site focused on growth.